GPU Resources

The HPC cluster has NVIDIA L40S GPUs and an NVIDIA A30 spread across various hosts and resources.

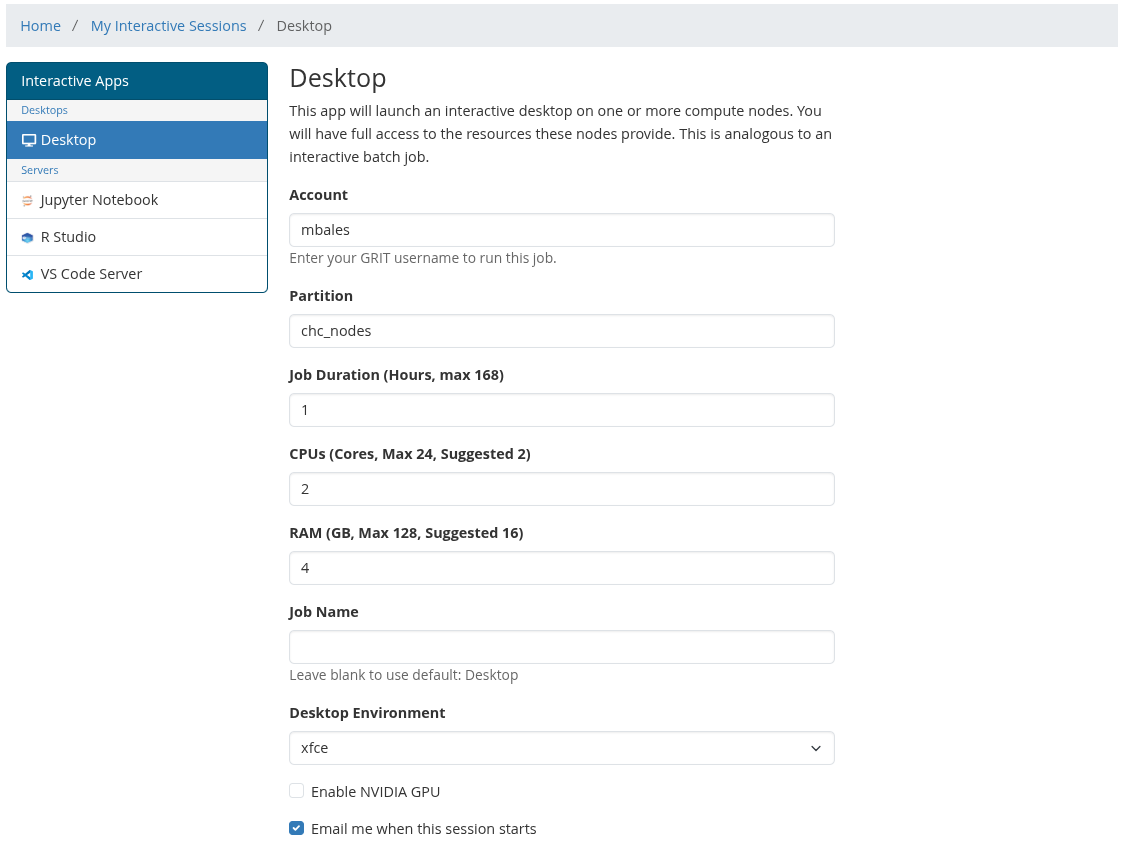

Interactive Apps

To access GPU resources via the Open OnDemand web UI, use the GPU options at the bottom of the interactive session form.

There are two GPU request modes:

- Full GPU — reserves an entire GPU for your job. Use this for large training jobs, GPU-heavy applications, or jobs that need most or all GPU memory.

- Shared GPU shards — requests a portion of a GPU. Use this for light interactive GPU work, testing, notebooks, MATLAB GPU checks, or jobs that do not need an entire GPU.

GPU shards allow multiple jobs to share the same physical GPU. Shards are scheduled by Slurm, but they are not the same as NVIDIA MIG and do not provide hard GPU memory isolation. If your job may use a large amount of GPU memory, request a full GPU instead.
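Because shards give no hard memory isolation, it can help to look at GPU memory use from inside a running session before deciding whether a shard is enough. The sketch below uses standard `nvidia-smi` query options; `gpu_mem_report` is a hypothetical helper name, not part of Slurm or the cluster tooling, and what it shows about other users' processes depends on how the node is configured.

```shell
# Sketch only: gpu_mem_report is a hypothetical helper, not a cluster-provided tool.
# It prints memory totals for the GPU(s) visible to the current session.
gpu_mem_report() {
    # Outside a GPU job (for example on a login node) nvidia-smi may be missing.
    if ! command -v nvidia-smi >/dev/null 2>&1; then
        echo "nvidia-smi not found; run this inside a GPU job"
        return 0
    fi

    # Per-GPU view: index, model name, memory in use, memory installed.
    nvidia-smi --query-gpu=index,name,memory.used,memory.total --format=csv \
        || echo "GPU query failed"

    # Per-process view: which compute processes hold GPU memory.
    nvidia-smi --query-compute-apps=pid,process_name,used_memory --format=csv \
        || echo "process query failed"
}

gpu_mem_report
```

If the memory in use regularly approaches the total, that is a sign the job may be better served by a full GPU request.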

Slurm CLI

GPU resources can also be requested from the Slurm command line, either in a batch script or with a single srun command. The examples below cover both request modes.

Request a full exclusive GPU:

    #!/bin/bash
    #SBATCH -J gpu-l40s-test
    #SBATCH -p grit_nodes
    #SBATCH --gres=gpu:1
    #SBATCH --cpus-per-task=8
    #SBATCH --mem=32G
    #SBATCH -t 01:00:00

    # Show the GPU assigned to this job
    nvidia-smi

    <your command here>

Full GPU one-liner:

    srun -p grit_nodes --gres=gpu:1 --cpus-per-task=4 --mem=16G --pty <your command here>

Request shared GPU shards:

    #!/bin/bash
    #SBATCH -J gpu-shard-test
    #SBATCH -p grit_nodes
    #SBATCH --gres=shard:4
    #SBATCH --cpus-per-task=4
    #SBATCH --mem=16G
    #SBATCH -t 01:00:00

    # Show the shared GPU this job runs on
    nvidia-smi

    <your command here>

Shared GPU shard one-liner:

    srun -p grit_nodes --gres=shard:4 --cpus-per-task=4 --mem=16G --pty <your command here>

Notes

GPUs are requested differently from CPUs and RAM: instead of a flag such as --cpus-per-task or --mem, you ask for a generic resource with --gres. A request such as --gres=gpu:1 reserves a full GPU for the job. A request such as --gres=shard:4 asks for four shards of shared GPU capacity, so other jobs can run on the same physical GPU.

Use --gres=gpu:1 when you need exclusive access to a GPU or expect heavy GPU memory usage. Use --gres=shard:<number> for lighter workloads that can share a GPU with other jobs.
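The choice between the two request types can be wrapped in a small shell function so the same command line works for both. This is a minimal sketch: `gres_option` is a hypothetical helper name, and the default of 4 shards simply mirrors the examples on this page rather than any cluster policy.

```shell
# Sketch only: gres_option is a hypothetical helper, not part of Slurm.
# Usage: gres_option full
#        gres_option shard [count]   (count defaults to 4, as in the examples)
gres_option() {
    local mode="$1" shards="${2:-4}"
    case "$mode" in
        full)
            # Exclusive access to one whole GPU.
            echo "--gres=gpu:1"
            ;;
        shard)
            # Reject anything that is not a positive integer.
            case "$shards" in
                ''|*[!0-9]*)
                    echo "shard count must be a positive integer" >&2
                    return 1
                    ;;
            esac
            if [ "$shards" -le 0 ]; then
                echo "shard count must be a positive integer" >&2
                return 1
            fi
            # Shared capacity on a GPU that other jobs may also use.
            echo "--gres=shard:${shards}"
            ;;
        *)
            echo "usage: gres_option full|shard [count]" >&2
            return 1
            ;;
    esac
}

# Example (not executed here):
#   srun -p grit_nodes $(gres_option shard 2) --cpus-per-task=4 --mem=16G --pty bash
```

Keeping the decision in one place makes it easy to switch a workflow from shards to a full GPU once its memory needs grow.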

You can check which GPU Slurm exposed to your job with:

    echo $CUDA_VISIBLE_DEVICES
    nvidia-smi
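The same check can be placed at the top of a job script so the allocation is recorded in the job output. The sketch below is one possible way to do that; `visible_gpu_count` is a hypothetical helper, and it treats an unset or empty CUDA_VISIBLE_DEVICES as "no GPUs", which is an assumption about how the job environment is set up.

```shell
# Sketch only: visible_gpu_count is a hypothetical helper, not part of Slurm.
# It counts the comma-separated entries in CUDA_VISIBLE_DEVICES.
visible_gpu_count() {
    local devices="${CUDA_VISIBLE_DEVICES:-}"
    # Assumption: unset or empty means no GPU was exposed to this shell.
    if [ -z "$devices" ]; then
        echo 0
        return 0
    fi
    # Split on commas and count the resulting fields.
    local IFS=','
    set -- $devices
    echo "$#"
}

# Record the allocation at the start of the job output.
echo "Slurm job ID:         ${SLURM_JOB_ID:-<not in a job>}"
echo "CUDA_VISIBLE_DEVICES: ${CUDA_VISIBLE_DEVICES:-<unset>}"
echo "GPUs visible:         $(visible_gpu_count)"
```

A job submitted with --gres=gpu:1 would be expected to report one visible GPU; a count of zero usually means the script is running outside a GPU allocation.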